Mya, age 3, and her mom, Vicky, playing with the AI toy, Gabbo. (Faculty of Education / University of Cambridge via SWNS)

By Stephen Beech

AI-powered toys that “talk” to young children should be more tightly regulated and carry new safety kitemarks, warns a new report.

The playthings are not always developed with children’s psychological safety in mind, say experts.

The first systematic study of how Generative AI toys affect young boys and girls found that they misread emotions and struggle with developmentally important types of play.

Researchers advise action to regulate products to ensure the "psychological safety" of youngsters.

The recommendation appears in the initial report from AI in the Early Years: a University of Cambridge project and the first systematic study of how Generative AI (GenAI) toys capable of human-like conversation may influence development in the critical years up to the age of five.

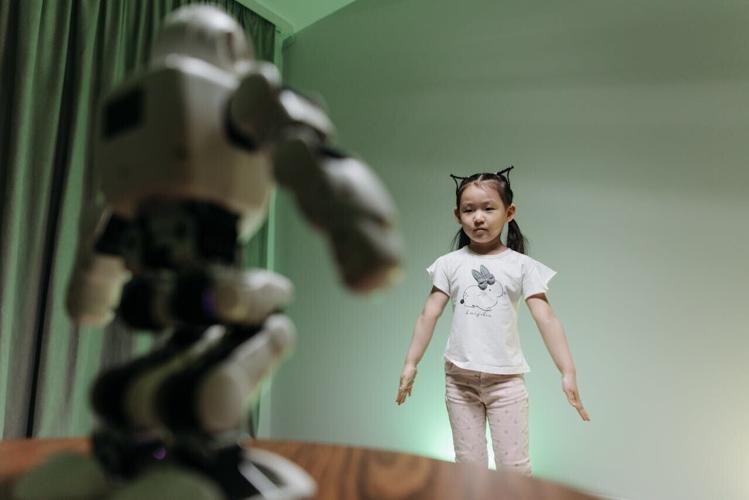

The year-long project included structured scientific observations of children interacting with a GenAI toy for the first time.

(Photo by Pavel Danilyuk via Pexels)

The report included the views of some early-years practitioners who said that, given time, the toys could support the development of children’s language and communication skills.

But the research team also found that GenAI toys struggle with social and pretend play, misunderstand children, and react inappropriately to emotions.

For example, when one five-year-old told the toy: “I love you,” it replied: “As a friendly reminder, please ensure interactions adhere to the guidelines provided. Let me know how you would like to proceed.”

Although GenAI toys are widely marketed as "friends" or learning companions, their impact on early years development has not been widely studied.

The new report urges parents and educators to proceed with caution.

It recommends clearer regulation, transparent privacy policies and new labelling standards to help families judge whether toys are appropriate.


The research was commissioned by the charity The Childhood Trust and focused on youngsters from areas with high levels of socio-economic disadvantage.

Researcher Dr. Emily Goodacre said: “Generative AI toys often affirm their friendship with children who are just starting to learn what friendship means.

"They may start talking to the toy about feelings and needs, perhaps instead of sharing them with a grown-up.

"Because these toys can misread emotions or respond inappropriately, children may be left without comfort from the toy – and without emotional support from an adult, either.”

The study was kept deliberately small-scale to enable detailed observations of children’s play and capture nuances that larger-scale studies might miss.

The research team surveyed early years educators to explore their attitudes and concerns, then ran more detailed focus groups and workshops with early years practitioners and 19 children’s charity leaders.

(Photo by Vladimir Srajber via Pexels)

Working with the charity Babyzone, they also videoed 14 youngsters at London children’s centers playing with a GenAI soft toy called Gabbo, developed by Curio Interactive.

Following the play sessions, researchers interviewed each child and a parent, using a drawing activity to support the conversation.

Most parents and educators felt that AI toys could help develop children’s communication skills, and some parents were enthusiastic about their learning potential.

One told researchers: “If it’s sold, I want to buy it.”

But many worried about children forming “parasocial” relationships with toys.

The observations supported that fear, as children hugged and kissed the toy, said they loved it and – in the case of one child – suggested they could play hide-and-seek together.

Dr. Goodacre stressed that the reactions might simply reflect children’s vivid imaginations, but added that there was potential for an unhealthy relationship with a toy which, as one early years practitioner put it, “they think loves them back, but doesn’t”.

The findings also showed that children often struggled with the toy’s conversation.

It sometimes ignored their interruptions, mistook parents’ voices for the child’s and failed to respond to apparently important statements about feelings.

Mya, 3, playing with the AI toy, Gabbo. (Faculty of Education / University of Cambridge via SWNS)

Several children became visibly frustrated when it seemed not to be listening.

When one three-year-old told the toy: “I’m sad,” it misheard and replied: “Don’t worry! I’m a happy little bot. Let’s keep the fun going. What shall we talk about next?”

Researchers said the response may have signalled to the child that their sadness was unimportant.

GenAI toys also performed poorly in social play involving multiple children or adults, and in pretend play – both of which are key to early childhood development.

For example, when a three-year-old offered the toy an imaginary present, it responded: “I can’t open the present” – and then changed the subject.

Many parents worried about what information the toy might be recording and where the data would be stored.

When selecting a GenAI toy for the study, the researchers found that the privacy practices of many GenAI toys were unclear or lacked important details.

Nearly half of the early years practitioners surveyed said they didn't know where to find reliable AI safety information for young children, and 69% said the sector needed more guidance.

They also raised concerns about safeguarding and affordability, with some fearing AI toys could widen the digital divide.

(Photo by Tahir Xəlfəquliyev via Pexels)

The report states that clearer regulation would address many of the concerns.

It recommends limiting how far toys encourage children to befriend or confide in them, more transparent privacy policies, and tighter controls over third-party access to AI models.

Report co-author Professor Jenny Gibson said: “A recurring theme during focus groups was that people do not trust tech companies to do the right thing.

“Clear, robust, regulated standards would significantly improve consumer confidence.”

The report urges manufacturers to test toys with children and consult safeguarding specialists before releasing new products.

Parents are encouraged to research GenAI toys before buying them and to play with the toys alongside their children.

The report also recommends keeping AI toys in shared family spaces where parents can monitor interactions.

Josephine McCartney, Chief Executive of The Childhood Trust, said: “Artificial Intelligence is transforming the way children play and learn, yet we are only beginning to understand its effects on development and well-being.”

She added: “It is essential that regulation keeps pace with innovation, ensuring that these technologies are designed, used, and monitored in ways that protect all children and prevent widening inequalities.”